The landscape of artificial intelligence infrastructure shifted at CES 2026 with Nvidia’s unveiling of the Vera Rubin architecture. Named after the astronomer Vera Rubin, whose measurements of galaxy rotation provided some of the strongest evidence for dark matter, the platform marks a clear departure from the chip-centric designs of the past. While industry observers and enthusiasts had colloquially anticipated an “H300” successor to the Hopper line, Nvidia has leapfrogged that nomenclature entirely, introducing a unified “rack-scale” system designed not just to train models but to power the next generation of “agentic” AI: autonomous systems capable of complex reasoning and multi-step tasks.

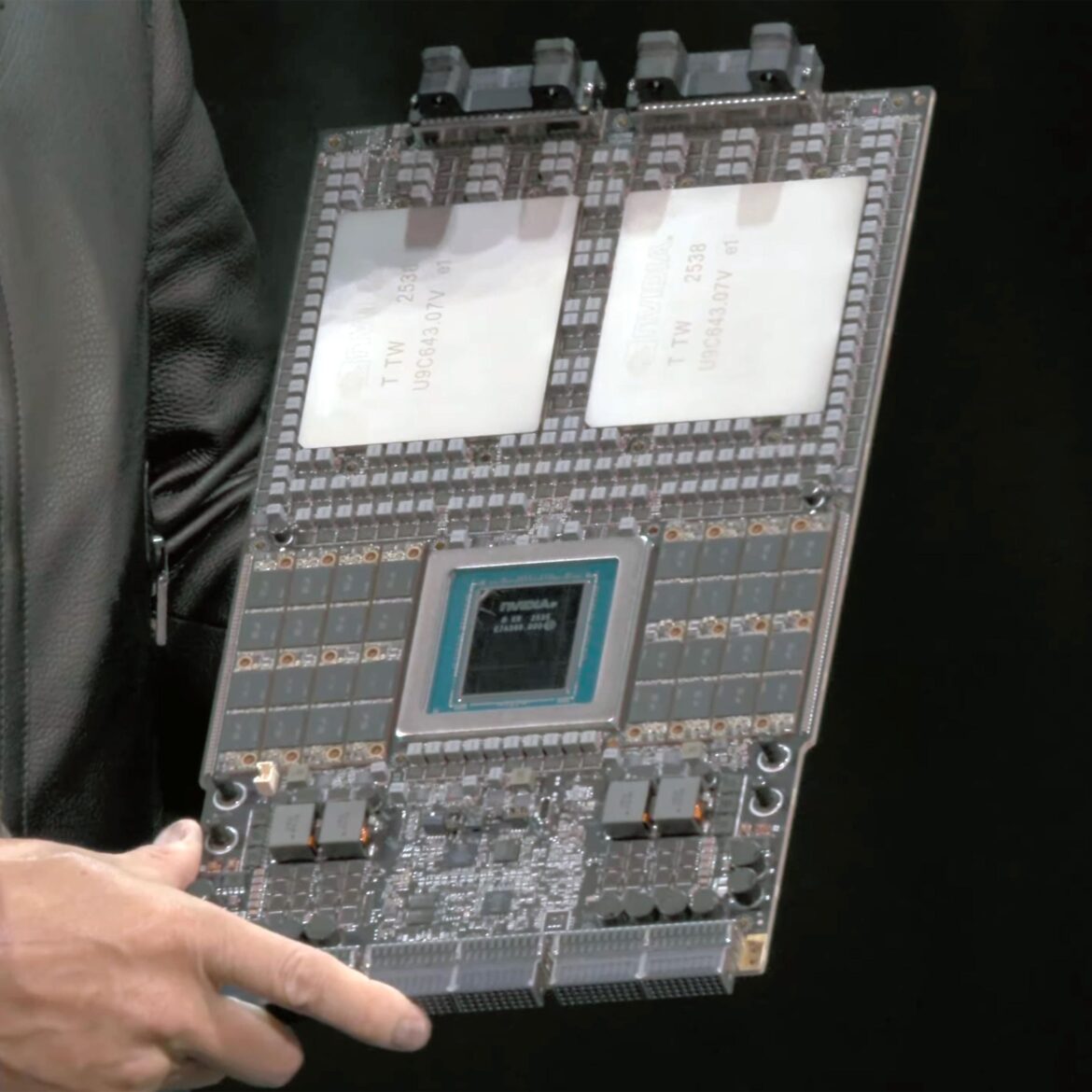

The centerpiece of this release is the Rubin GPU, a processor that rethinks the relationship between memory and compute. For years, the AI industry has faced a “memory wall,” a bottleneck in which processors can calculate faster than they can receive data. The Rubin GPU is designed to mitigate this barrier by integrating HBM4 (High Bandwidth Memory 4), a new memory standard that delivers 22 terabytes per second of bandwidth. This is roughly triple the speed of the previous generation, allowing the GPU to keep its computational cores fed with the massive datasets they work on. For professionals, this means lower latency in generating tokens, the basic units of AI text and code; for the broader market, it promises significantly faster and cheaper AI interactions.
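To make the memory-wall argument concrete, here is a rough back-of-the-envelope sketch. It assumes a deliberately simplified model in which generating one token requires streaming every model weight from memory once, so bandwidth alone caps the token rate. The 22 TB/s figure and the "roughly triple" ratio come from the text above; the 70-billion-parameter model stored at one byte per parameter is a hypothetical chosen only for illustration, and real systems (batching, caching, multi-GPU sharding) will behave differently.

```python
def decode_tokens_per_second(bandwidth_tb_s: float, model_bytes: float) -> float:
    """Upper bound on single-stream decode rate if each token reads all weights once."""
    bandwidth_bytes_s = bandwidth_tb_s * 1e12  # terabytes/s -> bytes/s
    return bandwidth_bytes_s / model_bytes


# Hypothetical model: 70 billion parameters at 1 byte each (an assumption, not a spec).
MODEL_BYTES = 70e9 * 1

# 22 TB/s is the figure quoted in the article.
rubin_rate = decode_tokens_per_second(22.0, MODEL_BYTES)

# The article says this is "roughly triple" the previous generation,
# so the earlier figure is approximated here as one third of 22 TB/s.
previous_rate = decode_tokens_per_second(22.0 / 3, MODEL_BYTES)

print(f"bandwidth-bound ceiling: {rubin_rate:.0f} tokens/s "
      f"vs {previous_rate:.0f} tokens/s")
```

Under these assumptions the ceiling scales linearly with bandwidth, which is why a threefold bandwidth increase translates directly into a threefold higher bound on token rate for memory-bound workloads.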

Complementing the GPU is the new “Vera” CPU, a custom Arm-based processor featuring 88 “Olympus” cores. Unlike the central processing units found in consumer laptops or standard servers, Vera is purpose-built to manage the immense data flow of an “AI factory.” It introduces a concept called Spatial Multithreading, which physically partitions each core’s resources so that multiple threads can run simultaneously, with the aim of avoiding the performance penalties that usually come from threads contending for the same core. Pairing the Vera CPU with the Rubin GPU creates a “superchip” architecture that treats the entire data center rack as a single computing unit rather than a collection of disparate parts.

Nvidia’s strategy with the Vera Rubin platform focuses heavily on the NVL72 rack-scale system. This behemoth connects 72 Rubin GPUs and 36 Vera CPUs into a single liquid-cooled machine linked by the sixth generation of NVLink. The interconnect lets all 72 GPUs act as one giant brain, sharing memory and data at speeds that make the physical distance between chips negligible. The architecture is specifically engineered for Mixture-of-Experts (MoE) models, a design in which a model is broken into smaller “expert” sub-models and each input is handled by only a few of them. By letting these experts communicate across the entire rack with very low latency, Nvidia has significantly reduced the cost of running these massive models compared with the previous Blackwell architecture.
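The reason MoE models lean so heavily on the interconnect can be seen in a minimal routing sketch. The code below shows generic top-k gating, a common MoE technique, and is not Nvidia’s implementation. The function names, the choice of `k=2`, and the round-robin placement of experts across 72 GPUs are all hypothetical, picked only to show how a single token can end up needing experts that live on different GPUs.

```python
import math


def route_token(gate_logits, k=2):
    """Pick the k highest-scoring experts for one token and return (index, weight) pairs."""
    # Softmax over the gate scores (subtracting the max for numerical stability).
    peak = max(gate_logits)
    exps = [math.exp(x - peak) for x in gate_logits]
    total = sum(exps)
    probs = [e / total for e in exps]

    # Keep only the k most probable experts.
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]

    # Renormalize so the kept weights sum to 1.
    kept = sum(probs[i] for i in top)
    return [(i, probs[i] / kept) for i in top]


def expert_to_gpu(expert_index, num_gpus=72):
    """Hypothetical placement: experts assigned round-robin to the rack's GPUs."""
    return expert_index % num_gpus


# Example: one token scored against four experts (made-up gate scores).
routes = route_token([0.1, 2.0, -1.0, 1.5], k=2)
for expert, weight in routes:
    print(f"expert {expert} on GPU {expert_to_gpu(expert)} with weight {weight:.3f}")
```

Because consecutive tokens may select different experts, activations are constantly shipped between GPUs, which is why the bandwidth and latency of the link between them matters as much as the speed of any single chip.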

The significance of this release extends far beyond raw speed specifications. The industry is currently transitioning from an era of “training”—teaching AI models—to an era of “inference” and “reasoning,” where models must actively think, plan, and execute tasks in the real world. This shift demands infrastructure that is not only powerful but also economically viable for continuous operation. The Rubin platform’s ability to reduce the energy and financial cost per token is a direct response to this market need, enabling companies to deploy autonomous AI agents that can work for extended periods without incurring prohibitive costs.

From a manufacturing perspective, the Rubin architecture relies on an intricate supply chain. The chips are fabricated on TSMC’s advanced 3nm process, pushing the limits of transistor density. The integration of HBM4 memory also highlights the critical role of memory partners like SK Hynix, Samsung, and Micron, who have had to innovate rapidly to meet Nvidia’s stringent thermal and speed requirements. This interdependence reflects an industry in which success is defined not just by silicon design, but by the ability to orchestrate a global network of advanced manufacturing and packaging technologies.

The introduction of the Vera Rubin architecture also widens the moat between Nvidia and its competitors. While rivals like AMD and Intel are making strides with their own accelerators, Nvidia’s move to a full-stack approach—controlling everything from the CPU and GPU to the networking switches and cabling—makes it increasingly difficult for data centers to mix and match components. By offering a fully integrated “AI factory in a rack,” Nvidia is essentially selling a turnkey supercomputer, solidifying its dominance in the cloud infrastructure of major players like Microsoft Azure, AWS, and Google Cloud.

The extreme density of the NVL72 system means that data centers can achieve higher performance within a smaller physical footprint, but it also necessitates advanced liquid cooling solutions. The shift from air-cooled servers to liquid-cooled racks is now effectively mandatory for top-tier AI facilities, marking a major step in how data centers are designed and built. The Vera Rubin platform’s focus on energy efficiency per token generated aims to balance the insatiable energy appetite of AI with the practical constraints of global power grids.

Ultimately, the Vera Rubin architecture serves as a blueprint for the next half-decade of artificial intelligence. It signals that the era of the standalone GPU is ending, replaced by the era of the system-level supercomputer. For the “AI amateur,” this means that the applications they use—from personalized assistants to automated video generators—will become smarter, faster, and more capable of understanding context. For the professional, it represents a new standard of compute density and efficiency that will define the economics of the intelligence revolution.

As mass production ramps up in late 2026, the industry is bracing for the “Rubin cycle,” a period likely to be characterized by the rapid obsolescence of older hardware and a scramble to secure these new rack-scale systems. Nvidia has once again reset the clock, proving that in the race for artificial general intelligence, the hardware that powers the mind is just as important as the algorithms that define it.